A new machine is supposed to remove headaches, not create them. Yet many plant managers have lived through the same sequence. The skid lands on the floor, electrical and controls teams start hookup, and then the surprises begin. An HMI screen doesn’t match the approved sequence. An alarm never clears. A sensor tag is wrong. The paperwork is incomplete. By the time the vendor is pulled back into the conversation, your install window is already burning down.

That’s why factory acceptance test software matters. It isn’t just a nicer way to run a pre-shipment checklist. It’s a practical way to prove that the equipment, controls, documentation, and evidence package are ready before the system leaves the builder’s floor. For small to mid-sized manufacturers, that matters just as much on a semi-automated cell or custom fixture as it does on a large integrated line, because the business impact of one delayed startup is often disproportionate.

Table of Contents

- Beyond the Punch List: Why FAT Matters More Than Ever

- What Factory Acceptance Test Software Really Does

- Core Features That Drive Efficiency and Compliance

- Navigating GMP Compliance and Validation

- Choosing the Right FAT Software Vendor

- Implementation Best Practices for Maximum ROI

- Common Pitfalls and How to Avoid Them

Beyond the Punch List: Why FAT Matters More Than Ever

A weak FAT usually doesn’t fail in an obvious way. It passes on paper, the machine ships, and the critical test happens during commissioning when your production team is waiting. That’s when a missed I/O check turns into wiring confusion, an unchecked recipe condition becomes a controls issue, and a vague deviation note forces everyone to reconstruct what happened weeks earlier.

On older projects, teams could sometimes absorb that pain because the machine itself was simpler. That’s less true now. Equipment increasingly includes connected devices, software-dependent logic, data collection, robotics, and tighter compliance expectations. According to Factory Acceptance Testing market projections, the FAT market is expected to grow from USD 2.89 billion in 2025 to USD 5.20 billion by 2033, driven by Industry 4.0 technologies such as IoT and AI that require more rigorous pre-installation validation.

That trend matches what plant teams feel on the ground. More complexity means more ways to be “mostly ready” and still lose days during startup.

A late software issue is rarely just a software issue. It becomes a scheduling issue, a labor issue, and often a documentation issue.

For that reason, FAT should be treated as a project control point, not just a release gate. The right software gives structure to the process before the witness day starts. It ties the user requirement to the test step, captures evidence while the machine is running, and records deviations in a way people can act on.

That matters for large capital projects, but it’s just as valuable on smaller upgrades. A semi-automated station may have fewer moving parts than a full line, yet the tolerance for ambiguity is often lower because smaller teams don’t have spare engineering bandwidth to clean up preventable startup issues.

What Factory Acceptance Test Software Really Does

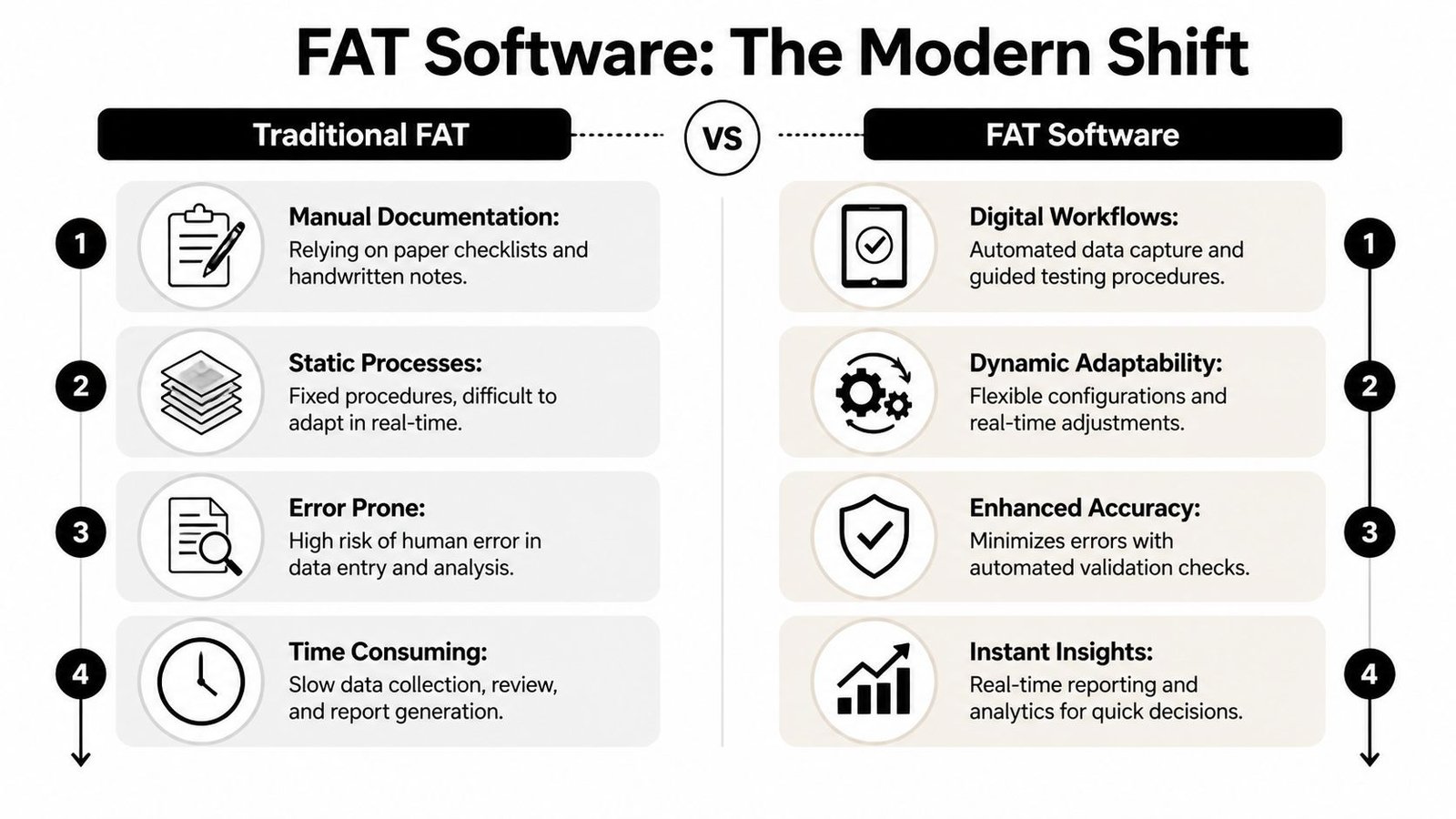

Paper FATs aren’t only slow. They create blind spots. One person marks up a printed protocol, another stores photos on a phone, someone else updates an issue list in a spreadsheet, and the final report becomes a manual assembly job. The process can still work, but only if the team is disciplined and lucky.

Modern factory acceptance test software replaces that fragmented workflow with a single controlled record. The protocol, evidence, comments, approvals, and deviations live in one place. It’s the difference between driving with a paper map and using live navigation that updates as conditions change.

A good system starts before test day. Engineers define the protocol from the approved design basis, user requirements, and functional expectations. During execution, technicians and witnesses record each result directly against the test step. If something fails, the deviation is logged at that moment, linked to the exact function, and routed for disposition instead of being buried in handwritten notes.
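The execution flow described above can be sketched as a small data model. This is a minimal illustration, not how any particular FAT product works: the class names, the `FAT-001` and `URS-012` identifiers, and the field layout are all assumptions made for the example. The point it shows is that a failed step creates its deviation at the moment of failure, already linked to the step.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class Deviation:
    step_id: str                       # the exact step that failed
    description: str
    logged_at: datetime
    disposition: Optional[str] = None  # filled in later by a reviewer


@dataclass
class ProtocolStep:
    step_id: str
    requirement_id: str                # hypothetical URS reference
    instruction: str
    result: Optional[str] = None       # "pass" or "fail" once executed
    executed_by: Optional[str] = None


@dataclass
class FatRecord:
    steps: List[ProtocolStep]
    deviations: List[Deviation] = field(default_factory=list)

    def record_result(self, step_id: str, passed: bool, tester: str, note: str = "") -> None:
        step = next(s for s in self.steps if s.step_id == step_id)
        step.result = "pass" if passed else "fail"
        step.executed_by = tester
        if not passed:
            # Log the deviation immediately, tied to the failing step.
            self.deviations.append(
                Deviation(step_id=step_id, description=note,
                          logged_at=datetime.now(timezone.utc))
            )


record = FatRecord(steps=[
    ProtocolStep("FAT-001", "URS-012", "Trigger E-stop; confirm HMI shows the alarm"),
    ProtocolStep("FAT-002", "URS-015", "Load recipe A; confirm setpoints match"),
])
record.record_result("FAT-001", passed=True, tester="jdoe")
record.record_result("FAT-002", passed=False, tester="jdoe",
                     note="Setpoint 3 differs from approved recipe")
```

Because the deviation carries the step identifier, nobody has to reconstruct later which function the note referred to.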

Teams that want a practical view of the FAT process itself can compare software workflows against a standard factory acceptance testing approach. The software doesn’t replace engineering judgment. It helps the team apply that judgment more consistently.

What changes in practice

The biggest shift is that the FAT becomes data-driven instead of document-driven. That sounds abstract until you see the day-to-day effect:

- Test execution becomes guided: Operators follow structured steps instead of bouncing between procedures, prints, and side notes.

- Evidence is attached in context: Photos, screenshots, calibration proof, and comments stay tied to the exact check being performed.

- Deviation handling gets cleaner: The team can assign, review, and close issues without rebuilding the story after the fact.

- Reporting stops being a separate project: The report is assembled from the live record instead of being recreated manually after everyone goes home.

Practical rule: If your FAT package depends on someone remembering where the screenshots were saved, you don’t have a controlled process.

The software also changes witness FAT dynamics. Customers, quality, controls, and operations all work from the same current protocol. That reduces the usual friction around version confusion, missing attachments, and unsigned changes. It also makes remote review more realistic when travel or timing gets tight.

The best systems don’t feel flashy. They feel boring in the right way. The process is clear, evidence is easy to retrieve, and there’s less room for informal workarounds that create trouble later.

Core Features That Drive Efficiency and Compliance

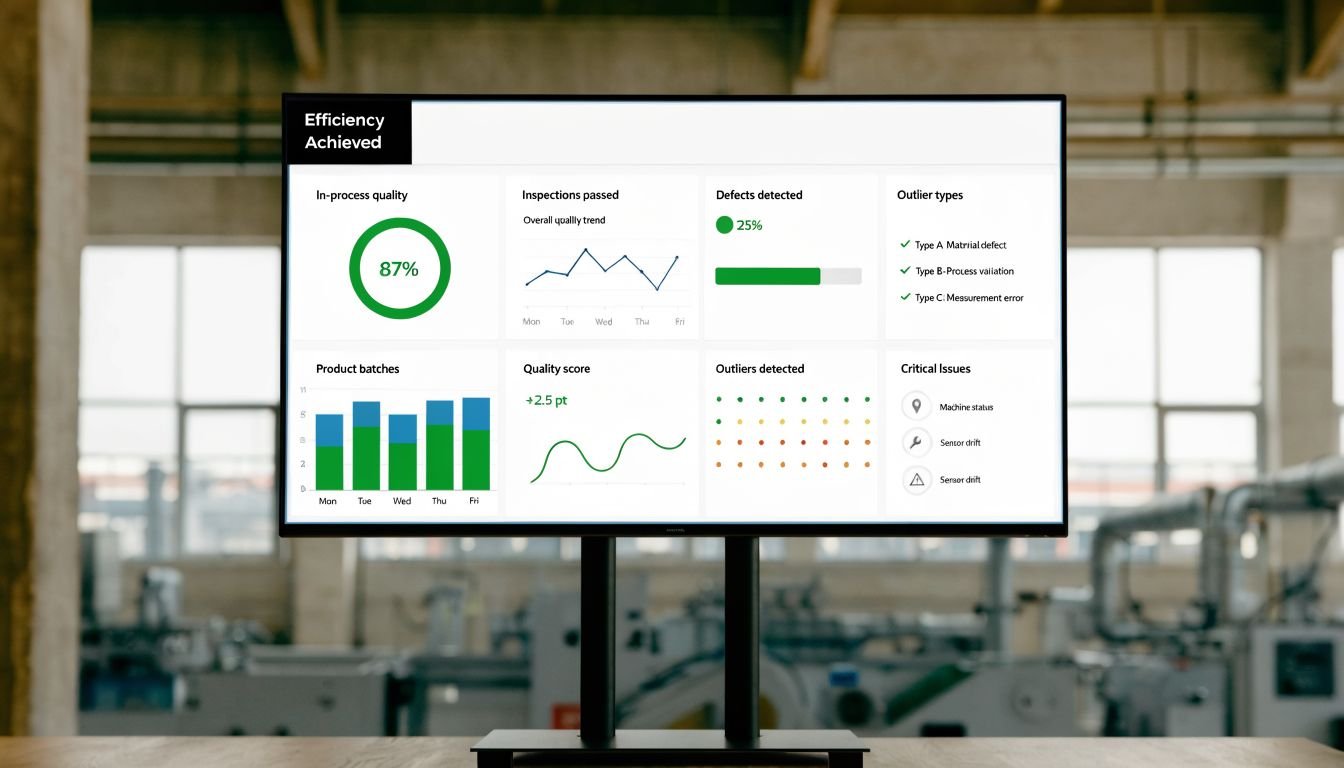

The value of factory acceptance test software doesn’t come from a long feature list. It comes from a short list of capabilities that remove common failure points from FAT execution. A well-executed FAT process supported by dedicated software typically uncovers 70-80% of functional defects before equipment shipment, helping avoid on-site fixes that can consume 10-15% or more of the total project budget, according to ESC Spectrum’s FAT guidance.

From checklist to controlled workflow

Digital protocol management is the backbone. The software should let your team build FAT templates around real machine functions, not generic pass-fail boxes. For a semi-automated assembly station, that might include sensor state changes, recipe handling, alarm behavior, reset logic, and operator prompts. For an integrated controls package, it may extend into communications, security settings, and dashboard verification.

Real-time deviation tracking is where many projects either stay under control or drift. During FAT, issues need ownership, priority, and closure logic. If a system logs a failed step but doesn’t support disciplined deviation handling, people still end up maintaining a separate punch list. That defeats the point.
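One way to picture "ownership, priority, and closure logic" is a small state machine. The sketch below is an assumption-laden example: the status names, the transition rules, and the `blocks_shipment` helper are invented for illustration and would need to match your own deviation procedure.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Status(Enum):
    OPEN = "open"
    ASSIGNED = "assigned"
    RETEST_PASSED = "retest_passed"
    CLOSED = "closed"


# Allowed transitions: a deviation cannot jump straight from OPEN to CLOSED.
ALLOWED = {
    Status.OPEN: {Status.ASSIGNED},
    Status.ASSIGNED: {Status.RETEST_PASSED},
    Status.RETEST_PASSED: {Status.CLOSED},
    Status.CLOSED: set(),
}


@dataclass
class Deviation:
    dev_id: str
    step_id: str
    priority: str                  # "high", "medium", or "low"
    status: Status = Status.OPEN
    owner: Optional[str] = None

    def _move(self, new_status: Status) -> None:
        if new_status not in ALLOWED[self.status]:
            raise ValueError(f"{self.status.value} -> {new_status.value} is not allowed")
        self.status = new_status

    def assign(self, owner: str) -> None:
        self._move(Status.ASSIGNED)
        self.owner = owner

    def pass_retest(self) -> None:
        self._move(Status.RETEST_PASSED)

    def close(self) -> None:
        self._move(Status.CLOSED)


def blocks_shipment(deviations: List[Deviation]) -> List[Deviation]:
    """Return high-priority deviations that are not yet closed."""
    return [d for d in deviations
            if d.priority == "high" and d.status is not Status.CLOSED]
```

With rules like these in the tool itself, the separate punch list has no job left to do: every open item already has an owner, a priority, and a defined path to closure.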

A useful platform also handles multimedia evidence capture well. Controls FATs often need HMI screenshots, audit-trail exports, instrument records, video of a sequence, or a photo of the as-built hardware condition. If those files can’t be linked directly to the test step, retrieval becomes painful when quality asks for evidence later.

Here’s where teams often underestimate the payoff:

| Capability | What it should do | Why it matters on the floor |
|---|---|---|
| Protocol control | Maintain current approved test steps | Prevents test drift and version confusion |
| Deviation tracking | Link failures to actions and disposition | Keeps open issues visible before shipment |
| Evidence capture | Attach photos, files, and screenshots in context | Saves time during review and audit prep |
| Report generation | Build FAT records from completed execution data | Reduces manual report cleanup |
| E-signatures and audit trail | Record who did what and when | Supports accountability and compliance |

Evidence that stands up later

Automated reporting matters because FAT records are rarely used only once. They resurface during SAT, troubleshooting, quality review, change control, and internal debate about what was tested. If report generation is clumsy, teams delay it, and delayed reporting creates memory gaps.

Later in the process, visual walkthroughs can also help align internal teams and suppliers on what good FAT execution looks like.

Electronic signatures and audit trails are the features many buyers relegate to the IT checklist. In practice, they are operational tools. When a step was executed, a result changed, or an exception was approved, the record should show exactly who did it and when. That removes the ambiguity that causes arguments during release review.
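To make "who did it and when" concrete, here is a minimal sketch of an append-only audit trail. Hash chaining is used here as one common tamper-evidence technique; it is an assumption for the example, not a claim about how any FAT product or regulation requires it to be done. The class, field names, and user names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditTrail:
    """Append-only log; each entry stores the hash of the previous entry."""

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the stored hash itself.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def append(self, user: str, action: str, step_id: str) -> dict:
        prev_hash = self._entries[-1]["hash"] if self._entries else ""
        entry = {
            "user": user,
            "action": action,
            "step_id": step_id,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Return False if any entry was altered or the chain was broken."""
        prev_hash = ""
        for entry in self._entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True


trail = AuditTrail()
trail.append("jdoe", "executed step, result=fail", "FAT-002")
trail.append("qa.lee", "approved exception", "FAT-002")
```

A real system would also need authenticated users and protected storage. The sketch only shows why a record with attributable, ordered entries is harder to dispute during release review.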

What doesn’t work is buying software that only digitizes paper. If the system can store a checklist but can’t control revisions, route deviations, or build useful evidence packages, you’ve paid for a more expensive clipboard.

Navigating GMP Compliance and Validation

In regulated manufacturing, speed isn’t enough. The record has to be defensible. A FAT can feel complete to the project team and still fall short for quality if the software doesn’t support traceability, controlled approvals, and reliable evidence capture.

That’s why GMP-aware teams care about more than convenience. In these environments, systems validated with a requirements traceability matrix and reliable FAT software can achieve a first-pass FAT yield greater than 95% and reduce site retesting by 40-60%, according to Sarom Global’s FAT and SAT protocol guidance.

What auditors and quality teams actually need

Quality doesn’t need a pretty dashboard. It needs objective evidence. In practice, that means the FAT record should connect the URS, functional specification, test step, result, deviation, and approval history without manual stitching. If a reviewer asks why a control function was accepted, the answer should already be in the system.
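The linkage from requirement to test step to approval can be checked mechanically. The following is a minimal sketch of a traceability gap check, assuming a simplified record layout; the identifiers (`URS-001`, `FAT-010`), the dictionary keys, and the reviewer name are hypothetical.

```python
from typing import Dict, List

# Hypothetical approved requirements and executed test steps.
requirements = ["URS-001", "URS-002", "URS-003"]

test_steps = [
    {"step_id": "FAT-010", "requirement": "URS-001", "result": "pass", "approved_by": "qa.lee"},
    {"step_id": "FAT-011", "requirement": "URS-002", "result": "fail", "approved_by": None},
]


def trace_gaps(requirements: List[str], test_steps: List[dict]) -> Dict[str, List[str]]:
    """Group requirements by the kind of traceability gap they have."""
    gaps = {"untested": [], "not_passed": [], "unapproved": []}
    for req in requirements:
        steps = [s for s in test_steps if s["requirement"] == req]
        if not steps:
            gaps["untested"].append(req)
            continue
        if any(s["result"] != "pass" for s in steps):
            gaps["not_passed"].append(req)
        if any(s["approved_by"] is None for s in steps):
            gaps["unapproved"].append(req)
    return gaps


gaps = trace_gaps(requirements, test_steps)
# gaps == {"untested": ["URS-003"], "not_passed": ["URS-002"], "unapproved": ["URS-002"]}
```

If a reviewer asks why a function was accepted, an empty result from a check like this is part of the answer; a non-empty one shows exactly where the record still needs work.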

For manufacturers working under regulated expectations, understanding what GMP means in manufacturing operations helps frame the software decision correctly. A generic digital form tool may collect signatures, but that doesn’t make it a validation-ready FAT platform.

The strongest systems support:

- Requirements traceability: Every critical function maps back to an approved requirement.

- Controlled user access: Testers, reviewers, and approvers don’t all need the same permissions.

- Immutable audit history: Record changes are visible, attributable, and reviewable.

- Structured exception handling: Deviations are resolved through process, not hallway agreement.

In a regulated startup, undocumented testing is often treated the same as unperformed testing.

Where digital tools help and where they don’t

Software helps most when the process is already disciplined. It can enforce sequence, preserve evidence, and reduce transcription mistakes. It can’t fix weak specifications, vague acceptance criteria, or a team that treats FAT as a formality.

A common mistake is assuming any tablet-based checklist supports compliance. It doesn’t. If the tool can’t show revision control, approval history, or requirement linkage, quality teams will still need side systems to close the gap. That adds effort and introduces risk.

The practical standard is simple. If your FAT package may need to support release decisions, validation review, or audit response, choose software that behaves like part of the quality system, not just part of the project file.

Choosing the Right FAT Software Vendor

A plant usually feels the cost of a weak FAT software decision on the second or third project, not in the demo. The first custom fixture gets through with extra spreadsheets and email approvals. Then a second supplier uses a different format, an integrated cell needs clearer evidence, and your team starts rebuilding records by hand to close the gaps.

Vendor selection should start with the equipment you buy. Small and mid-sized manufacturers rarely run only one type of project. The same site may add a semi-automated workstation this quarter, a vision fixture next quarter, and a higher-risk packaging or assembly cell after that. FAT software has to support that range without forcing enterprise-level overhead onto simple jobs.

That trade-off matters. A platform built around large capital programs can bury a small project under too many fields, roles, and review steps. A lightweight checklist app creates the opposite problem. It may work for a benchtop fixture, then fall apart when you need controlled retests, exception tracking, or a clean turnover package for quality and maintenance.

The better vendors can explain how their software handles FAT by level of risk and complexity. They should be comfortable showing a simple workflow for mechanical checks and I/O verification, then a more structured path for functional testing, recipe verification, alarm challenges, and document approval on a larger system. If every project has to fit one heavy process, adoption will slip. If every project stays too loose, review time and project risk go up.

Vendor fit also depends on your supply base. Plants that work with a mix of OEMs, panel shops, and integrators need a tool outside parties will use. That is one reason FAT software often becomes part of the broader automation manufacturer selection process. If one machine builder can work inside your FAT standard and another keeps exporting PDFs and side notes, your internal team absorbs the cleanup.

Ask for a live walkthrough tied to a real project type from your plant. A good demo is not a tour of dashboards. It is a test execution example with a failed step, attached evidence, review comments, retest, approval, and final report output. That sequence shows whether the software reduces project risk or just changes where the admin work happens.

Use a review checklist that exposes the practical gaps early:

| Evaluation Criterion | What to Ask | Why It Matters for ROI |
|---|---|---|
| Fit for project mix | Can the software handle both small fixtures and larger integrated systems without extra admin burden? | Keeps the tool usable across the projects that actually drive plant improvements |
| Supplier usability | How do outside builders log in, execute tests, and respond to failures? | Supplier resistance turns digital FAT into an internal rework exercise |
| Record output | Can the platform produce a clean FAT package with test results, evidence, approvals, and exceptions? | Saves engineering and quality time at turnover |
| Change control | How are revisions to protocols, test steps, and retests managed? | Prevents confusion over which version was executed |
| System fit | Can data move into your document control or quality workflow without manual re-entry? | Cuts duplicate work and lowers transcription risk |
| Validation support | What documents and intended-use guidance are available for regulated projects? | Reduces the burden on quality teams when a project needs tighter control |

One more test helps separate serious vendors from polished sales teams. Ask them to show what happens when a test fails late in FAT, after several steps are already approved. If they cannot show clear exception handling, controlled retest, and a final report that tells the story cleanly, your engineers will be stitching that story together under schedule pressure.

Implementation Best Practices for Maximum ROI

Buying the platform is the easy part. The return comes from making it part of the project workflow before the next machine is on the dock.

A thorough FAT that validates control software and instrument calibration can reduce SAT nonconformities by 50-70%, according to YMC America’s FAT services guidance. That payoff doesn’t happen automatically. It depends on how the software is set up and how consistently teams use it.

Build the process before you scale it

Start with a template library. Most plants repeat equipment patterns even when each project feels custom. You might have recurring FAT elements for fixtures, operator stations, servo assemblies, vision checks, recipe-driven machines, or packaging cells. Build templates around those patterns so the first draft isn’t reinvented every time.

Then define what “good evidence” means. Some teams require screenshots for every alarm and recipe check. Others only capture exceptions. Neither approach is universally right. What matters is consistency. If one vendor attaches files step-by-step and another uploads a loose folder at the end, your records won’t review the same way.

A practical rollout usually works best when you:

- Standardize acceptance criteria: Avoid vague language like “works as expected.”

- Onboard suppliers early: Don’t introduce the software the week before witness FAT.

- Assign review roles clearly: Engineering, quality, and operations should know who approves what.

- Pilot on one project type first: Prove the workflow on a manageable scope before wider rollout.

Make FAT data useful after shipment

The second payoff comes when FAT records inform SAT instead of sitting in an archive. If the FAT shows what was already verified at the vendor site, your site team can focus on utilities, installation impacts, field interfaces, and integration conditions rather than repeating basic checks without purpose.

That only works if the data structure is clean. SAT teams need searchable records, closed deviations, and a clear picture of what remains open by design. Otherwise, they’ll ignore the FAT package and rebuild the validation effort from scratch.
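As a rough illustration of what "clean data structure" buys the SAT team, the sketch below splits FAT steps into those already verified at the vendor site and those that should carry into SAT. The `site_dependent` flag, the record layout, and the step identifiers are assumptions made for the example.

```python
from typing import Dict, List


def sat_handoff(fat_steps: List[dict], deviations: List[dict]) -> Dict[str, List[str]]:
    """Split FAT steps into 'verified at FAT' and 'carry to SAT'."""
    open_steps = {d["step_id"] for d in deviations if d["status"] != "closed"}
    verified, carry = [], []
    for step in fat_steps:
        done = (
            step["result"] == "pass"
            and step["step_id"] not in open_steps
            and not step["site_dependent"]  # e.g. utilities, field interfaces
        )
        (verified if done else carry).append(step["step_id"])
    return {"verified_at_fat": verified, "carry_to_sat": carry}


fat_steps = [
    {"step_id": "FAT-001", "result": "pass", "site_dependent": False},
    {"step_id": "FAT-002", "result": "pass", "site_dependent": True},   # needs plant utilities
    {"step_id": "FAT-003", "result": "fail", "site_dependent": False},
]
deviations = [{"step_id": "FAT-003", "status": "open"}]

handoff = sat_handoff(fat_steps, deviations)
# handoff == {"verified_at_fat": ["FAT-001"], "carry_to_sat": ["FAT-002", "FAT-003"]}
```

The split only works if results, deviation status, and site dependence were recorded as structured fields during FAT rather than as free-text notes.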

The long-term benefit is operational, not just procedural. Once teams can compare FAT results across projects, they start seeing patterns. Certain alarms fail repeatedly. Certain vendors submit weak documentation. Certain machine types need stronger simulation before witness day. That’s where factory acceptance test software stops being a test tool and starts becoming an engineering feedback loop.
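The pattern-spotting described above needs nothing more exotic than counting failures across projects. Here is a minimal sketch using Python's standard `collections.Counter`; the project numbers, vendor names, and test categories are made up for illustration.

```python
from collections import Counter
from typing import List

# Hypothetical FAT results exported from several projects.
results = [
    {"project": "P-101", "vendor": "Vendor A", "category": "alarm", "result": "fail"},
    {"project": "P-101", "vendor": "Vendor A", "category": "recipe", "result": "pass"},
    {"project": "P-102", "vendor": "Vendor B", "category": "alarm", "result": "fail"},
    {"project": "P-103", "vendor": "Vendor A", "category": "io", "result": "fail"},
    {"project": "P-103", "vendor": "Vendor A", "category": "recipe", "result": "pass"},
]


def failure_counts(results: List[dict], key: str) -> Counter:
    """Count failed steps grouped by the given field."""
    return Counter(r[key] for r in results if r["result"] == "fail")


by_category = failure_counts(results, "category")
by_vendor = failure_counts(results, "vendor")
# by_category.most_common(1) == [("alarm", 2)]
# by_vendor.most_common(1) == [("Vendor A", 2)]
```

Raw counts like these are a starting point for a conversation, not a verdict: a vendor with more projects will naturally show more failures, so a real analysis would normalize by the number of steps executed.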

Common Pitfalls and How to Avoid Them

A plant buys FAT software after one painful project, then uses it exactly like the old shared drive. PDFs go in. Signatures get collected. The team still argues over test intent, vendors still keep evidence in side files, and SAT still starts with someone asking which version is current. That is a process problem wearing new software.

One common failure is buying for document storage instead of test execution. Good FAT software should control versioning, guide the technician through each step, capture evidence at the point of test, and route exceptions to the right reviewer. If it only stores finished files, it will not reduce risk, and it will not save much time.

Late supplier adoption creates the next problem. If a machine builder sees your FAT workflow a few days before witness testing, they will default to what they know. That usually means screenshots in local folders, punch items tracked in Excel, and missing context around failures. For small and mid-sized manufacturers, this hurts more than people expect, because one delayed semi-automated station can hold up a line improvement that was supposed to pay back this quarter.

Poor protocol writing causes a different kind of waste. A step like "verify alarm works" leaves too much open to interpretation. Write what triggers the alarm, what the HMI should display, what output or interlock should change state, and what counts as pass or fail. Clear steps shorten review time and reduce the back-and-forth between engineering, quality, and the OEM.
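One way to force that level of detail is to make the step itself structured. The sketch below assumes a simple alarm check with one HMI message and one output to observe; the class, field names, and example values are hypothetical and would differ for a real machine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AlarmTestStep:
    step_id: str
    trigger: str                  # what the tester does to raise the alarm
    expected_hmi_message: str     # what the HMI should display
    expected_output: str          # the output or interlock being observed
    expected_output_state: bool   # the state that output should be in

    def evaluate(self, observed_hmi_message: str, observed_output_state: bool) -> str:
        """Pass only if both the HMI message and the output state match."""
        hmi_ok = observed_hmi_message == self.expected_hmi_message
        output_ok = observed_output_state == self.expected_output_state
        return "pass" if hmi_ok and output_ok else "fail"


step = AlarmTestStep(
    step_id="FAT-020",
    trigger="Open guard door while the cycle is running",
    expected_hmi_message="Guard door open",
    expected_output="Drive enable",
    expected_output_state=False,   # drive enable should drop out
)

print(step.evaluate("Guard door open", False))
print(step.evaluate("Guard door open", True))
```

The first call passes and the second fails, because the alarm displayed but the drive stayed enabled. A step written as "verify alarm works" would have let that second case through on the tester's judgment alone.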

Another mistake is applying the same FAT depth to every asset. A custom fixture, a semi-automated workstation, and a GMP-critical skid-mounted system do not need the same level of testing or documentation. If you over-test simple equipment, engineering hours disappear into paperwork. If you under-test controls-heavy systems, the cost shows up later in commissioning delays, deviation handling, and production downtime.

Use a risk-based scope instead:

- Low-complexity fixtures and tooling: Verify the build, safety basics, and key functional checks in a controlled but lean protocol.

- Semi-automated cells: Add sequence testing, alarm and interlock checks, recipe or parameter verification, and clearer evidence capture.

- Integrated or compliance-heavy systems: Require traceability by requirement, formal exception handling, review approvals, and records that can stand up to quality review.
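The three tiers above can be written down as a simple selection rule so that scoping is decided the same way on every project. This is a sketch under stated assumptions: the three yes/no inputs and the tier names are invented for the example, and a real risk assessment would likely weigh more factors.

```python
# Activities per tier, taken from the list above; each tier builds on the one before.
LEAN = ["build verification", "safety basics", "key functional checks"]
STANDARD = LEAN + [
    "sequence testing",
    "alarm and interlock checks",
    "recipe or parameter verification",
    "evidence capture per step",
]
FULL = STANDARD + [
    "requirement traceability",
    "formal exception handling",
    "review approvals",
]

SCOPES = {"lean": LEAN, "standard": STANDARD, "full": FULL}


def select_scope(has_control_logic: bool, integrated: bool, gmp_critical: bool) -> str:
    """Pick a FAT tier from three hypothetical yes/no risk questions."""
    if integrated or gmp_critical:
        return "full"
    if has_control_logic:
        return "standard"
    return "lean"


# A manual fixture with no control logic gets the lean protocol.
fixture_scope = SCOPES[select_scope(False, False, False)]
# A semi-automated cell with recipes and interlocks gets the standard one.
cell_scope = SCOPES[select_scope(True, False, False)]
```

Writing the rule down matters more than the exact thresholds. It gives engineering and quality one place to argue about scope before the protocol is drafted instead of during witness FAT.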

The return comes from applying discipline where it pays back. That matters for large capital projects, but it matters just as much for the smaller projects that often drive the best ROI in real plants. A well-scoped FAT package for a fixture, test stand, or semi-automated station can prevent weeks of rework without forcing the team into validation overhead that the project does not need.

If you're planning a new fixture, semi-automated station, or a broader production upgrade, System Engineering & Automation helps manufacturers choose the right level of automation and validation for the job. SEA builds practical manufacturing solutions that improve throughput, quality, and compliance without overcomplicating the project, and supports the full path from concept through commissioning.